This might seem a brave call when talking about cyber-security threat information. But the truth is that the cyber world forces a new paradigm on security. The tools that are familiar in the offline world for providing elements of security, such as obscurity, tend to benefit the attackers rather than the defenders, because the very advantages of the online world, things like search and constant availability, are also its greatest weaknesses. What matters most in the online world is not what you know, but how fast you know it and how fast you make use of the information you have.

I’ve been reading the Cyber Security Task Force: Public-Private Information Sharing report, and I think it’s worth promoting what it says. It presents a call to action for government and companies in the US to improve information-sharing to reduce the increasing risks from cyber attacks on organisations, both public and private. The work was clearly done with a view to helping the passage of legislation being proposed in the USA. However, most, if not all, of the findings could be extrapolated to every advanced democracy around the world.

If you are familiar with this field, much of what has been written will not be new, as we have been calling for the sorts of measures proposed in the report since at least 2002. That does not mean that the authors haven’t made a valuable contribution, because they have made recommendations about how to solve the problem. Specifically, they recommend removing legislative impediments to sharing whilst maintaining protections on personal information.

According to the authors, from October 2011 through February 2012, over 50,000 cyber attacks on private and government networks were reported to the Department of Homeland Security (DHS), with 86 of those attacks taking place on critical infrastructure networks. As they rightly point out, the number reported represents a tiny fraction of the total occurrences.

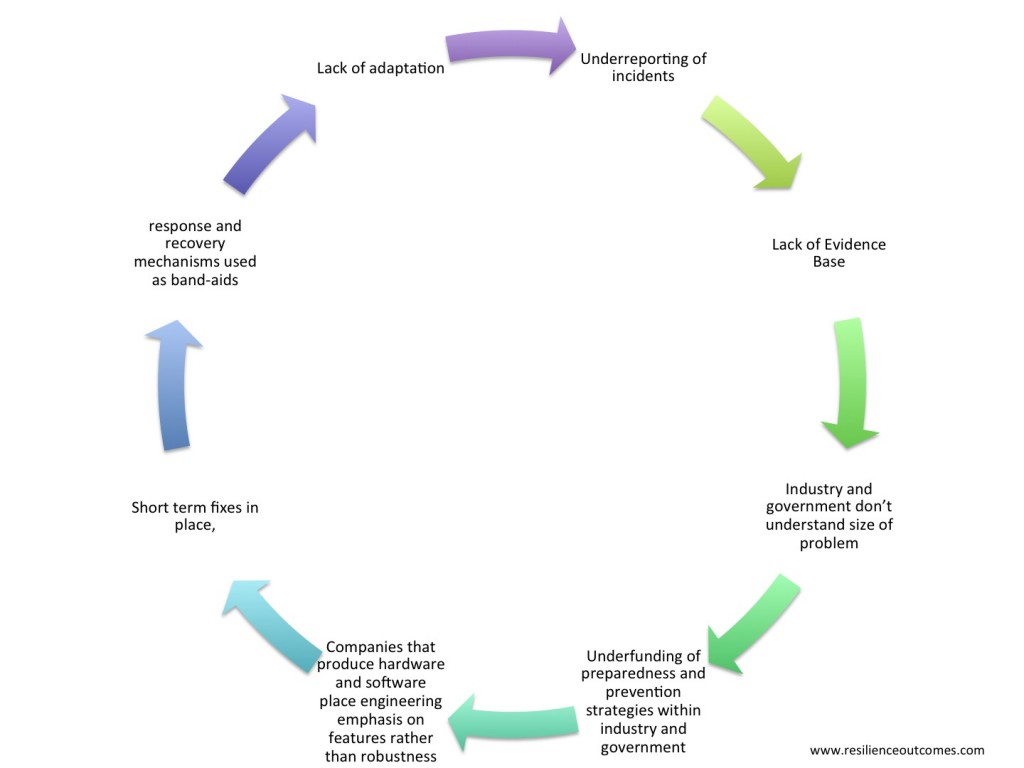

As is the case in many areas of security, the lack of an evidence base is at the core of the problem, because it creates a cycle where there is resistance to change and adaptation to fix the problems efficiently and effectively.

Of course, the other thing that happens is that organisations don’t support an even level of focus or resourcing on the problem, because, most of the time, like an iceberg, the bit of the problem that you can ‘see’ is comparatively small.

To make matters worse, new research is telling us that we are optimistically biased when making predictions about the future. That is, people generally underestimate the likelihood of negative events. So without ‘hard’ data, and given the choice of underestimating the size of a problem or overestimating it, the people who make decisions in organisations and governments are likely to underestimate the likelihood of bad things happening. You can find out more about the optimism bias in a talk by Tali Sharot on TED.com.

The cost differential to organisations when they don’t build in cyber security, are unable to mitigate risks and then need to recover from cyber attacks is significant. This cost is felt most by the organisations affected, but its effects are passed across an economy.

So what can be done to break this cycle of complacency? Government and industry experts have long spoken about the need for better sharing of information about cyberthreats. I was talking in public fora about this ten years ago.

The devil is in the detail of the ‘what’ and the ‘how’. Inside the ‘what’ is also the ‘who’. I’ll explain below.

What should be shared, who should do the sharing – and with whom?

Both government and industry generally agree enthusiastically that there should be sharing, but each thinks that the other party should be doing more of it and then comes up with any number of excuses as to why it can’t! For those who are fans of iconic 80s TV, it reminds me of the Yes Minister episode where the PM wants to have a policy of promoting women and in cabinet each minister enthusiastically agrees that it should be done, whilst explaining why it wouldn’t be possible in his department. In government, the spooks will tell you that they have ‘concerns’ with sharing, i.e. they want to spy on other countries and don’t want to give up any potential tools. It’s no better in industry: companies don’t have an incentive to share specific data, because their competitors might get some kind of advantage.

The UK has developed perhaps the most mature approach to this. UK organisations have been subject to a number of significant cyber attacks and government officials attempt to ‘share what is shareable’. The ability to do this may be because of the close relationship between the UK government and industry, which was developed initially during the time of the Troubles in Ireland and has been maintained in one form or other through the terrorism crises of this century. It remains to be seen whether the government will be able to maintain these relationships, and whether UK industry will see value in them, as the UK and Europe struggle with short-termism brought on by the fiscal situation.

Australia has also attempted to share what is shareable; however, as the government computer emergency response team sits directly within a department of state, this is very difficult. It seems that the CERT does not have a clear mission. Is it an arbiter of cyber-policy and information disseminator, or an operational organisation that facilitates information exchange on cyber issues between government and industry?

This quandary has not been solved completely by any G20 country. Indeed, it will never be solved; it is a journey without end. It is possible that New Zealand has come closest, but this seems to be because of the small size of the country and the ability to develop individual relationships between key people in industry and government. Another country that is doing reasonably well is South Korea, mainly because it has to: it has the greatest percentage of broadband users of any country, and North Korea is just a telephone line away. The Korea Internet & Security Agency (KISA) brings together industry development, Internet policy, personal information protection, government security, incident prevention and response under one umbrella.

For larger countries, I am of the view that a national CERT should be a quasi-government organisation that is controlled by a board made up of:

- companies that are subject to attack (including critical infrastructure);

- network providers;

- government security agencies; and

- government policy agencies.

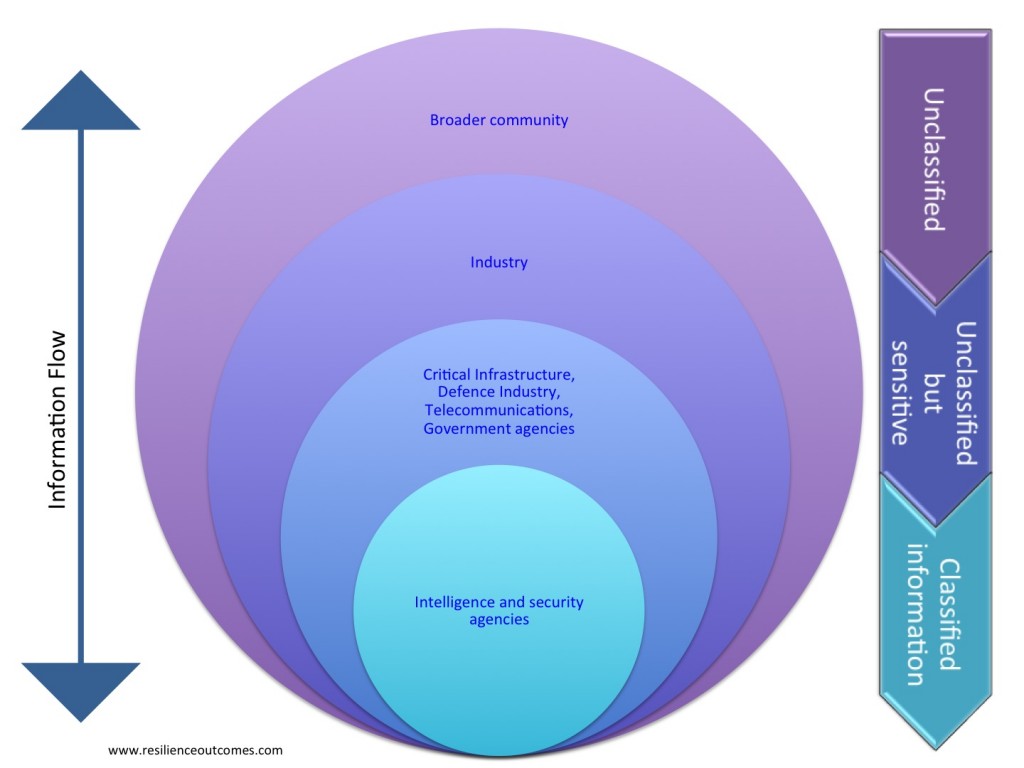

In this way, the CERT would strive to serve the country more fully. There would be more incentive for government to share information with industry and for industry to share information with government. With this template, it is possible to create a national cyber-defence strategy that benefits all parts of society and provides defence-in-depth to those parts of the community that we are most dependent on, i.e. the critical infrastructure and government.

Ensuring two-way information flow within the broader community and with industry has the potential to provide direct benefits for national cyber-security and for the community more broadly. Firstly, by helping business and the community to protect themselves. Secondly, by helping government, telecommunications providers and the critical infrastructure to develop sentinel systems in the community which, like the proverbial canary in the coalmine, signal danger if they are compromised. Thirdly, by improving the evidence base through increased quality and quantity of incident reporting, a benefit that is so often overlooked.

Governments can easily encourage two-way communication by ‘sharing first’. Industry often questions the value of information exchanges, because its people turn up to these events at their own expense, some government bigwig opens proceedings and says ‘let there be sharing’, and then there is silence, because the operatives from the three-letter security agencies don’t have the seniority to share anything and the senior ones don’t understand the technical issues. I am not the first person to say that in many cases (I think 90%), technical details that can assist organisations to protect their networks do not need to include the sensitive ‘sources and methods’ discussion. By that I mean, if a trust relationship exists or is developed between organisations in government and industry, and one party passes a piece of information to the other and says “Do x and be protected from y damage”, then the likelihood that the receiving party will take the action depends on how much they trust the provider. Sources and methods information is useful in helping to determine trustworthiness, but it is not (usually) essential to fixing the problem.

As the Public-Private Information Sharing report suggests, many of the complex discussions about information classification, over-classification and national security clearances can be left behind. Don’t get me wrong; having developed the Australian Government’s current protective security policy framework, I think there is a vital place for security clearances and information classification. However, I think that they are vastly over-rated in a speed-of-light environment where the winner is not the side with the most information, but the side that can operationalise it most quickly and effectively. Security clearances and information classification get in the way of this and potentially deliver more benefit to the enemy by stopping the good guys from getting the right information in time. We come back to the question of balancing confidentiality, integrity and availability: sensitive information is more perishable than ever.

How should cyber threat information be shared?

This brings me to the next area of concern. There is also a problem with how information is shared between industry and government or, more importantly, the speed with which it is shared. In an era when cyber attacks are automated, the defence systems are still primarily manual and, amazingly, in some cases rely on paper-based systems to exchange threat signatures. There is an opportunity for national CERTs to significantly improve the current systems so that unclassified information about threats is shared automatically. Ideally these systems would be designed so that threat information goes out to organisations from the national CERT and information about possible data breaches returns immediately to be analysed.
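To make the two-way flow a little more concrete, here is a minimal sketch in Python of what an automated exchange might look like. It is an illustration under stated assumptions, not a description of any real CERT’s system: the `Indicator` fields, the JSON layout and the function names are all hypothetical, and the addresses and domains are reserved documentation examples.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class Indicator:
    """One unclassified threat indicator (hypothetical schema)."""
    indicator_id: str
    kind: str    # e.g. "ipv4" or "domain"
    value: str   # the thing to look for
    action: str  # the "do x" advice; no sources and methods included


def encode_feed(indicators):
    """CERT side: serialise indicators into a machine-readable feed."""
    return json.dumps([asdict(i) for i in indicators], sort_keys=True)


def decode_feed(payload):
    """Member side: parse a feed back into Indicator objects."""
    return [Indicator(**item) for item in json.loads(payload)]


def build_sighting_report(org_id, indicators, observations):
    """Member side: report which indicators were seen locally.

    observations is an iterable of (kind, value) pairs taken from the
    organisation's own logs. Only matching indicator ids go back.
    """
    seen = set(observations)
    hits = [i.indicator_id for i in indicators if (i.kind, i.value) in seen]
    return {"org": org_id, "sightings": sorted(hits)}


if __name__ == "__main__":
    feed = encode_feed([
        Indicator("ind-1", "ipv4", "192.0.2.10", "block at the perimeter"),
        Indicator("ind-2", "domain", "bad.example", "sinkhole in DNS"),
    ])
    received = decode_feed(feed)
    local_logs = [("domain", "bad.example"), ("ipv4", "198.51.100.7")]
    print(build_sighting_report("org-a", received, local_logs))
```

The point of the sketch is the shape of the loop rather than the detail: indicators go out in a form a machine can act on, and sightings come straight back without anyone retyping a signature from a piece of paper.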

Of course, the other benefit of well-designed automated systems could be that they automatically strip customers’ private information out of any communications. As with the sources and methods info, people’s details are not important (spear phishing being an exception). In most cases, I’d rather have a machine automatically removing my private details than some representative of my ‘friendly’ telecommunications provider or other organisation.
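As a rough illustration of that kind of automatic stripping, the Python sketch below keeps only an allowlist of technical fields from an incident report and masks email addresses in free text. Everything in it is an assumption made for the example: the field names, the choice of which fields count as shareable, and the deliberately simple email pattern. The `keep_emails` flag is there for the spear-phishing exception, where the targeted address may itself be relevant.

```python
import re

# Deliberately simple pattern; an assumption for illustration only.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

# Hypothetical allowlist: only these fields ever leave the organisation.
SHAREABLE_FIELDS = {"indicator_id", "observed_at", "source_ip", "summary"}


def strip_personal_details(report, keep_emails=False):
    """Return a copy of report that is safe to send onwards.

    Fields outside the allowlist are dropped entirely. Email addresses
    in the remaining text fields are masked unless keep_emails is True.
    """
    cleaned = {}
    for key, value in report.items():
        if key not in SHAREABLE_FIELDS:
            continue  # e.g. customer names and account numbers never leave
        if isinstance(value, str) and not keep_emails:
            value = EMAIL_RE.sub("[email removed]", value)
        cleaned[key] = value
    return cleaned


if __name__ == "__main__":
    raw = {
        "indicator_id": "ind-2",
        "customer_name": "Jane Citizen",
        "summary": "Phish sent to jane@example.org from bad.example",
        "source_ip": "192.0.2.10",
    }
    print(strip_personal_details(raw))
```

An allowlist is used rather than a blocklist so that a new field added to the report later is withheld by default until someone decides it is safe to share.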

These things are all technically possible; the impediments are only organisational. Isn’t it funny: people are inherently optimistic, but don’t trust each other. It’s surprising civilisation has got this far.

References

1. How Dopamine Enhances an Optimism Bias in Humans. Sharot, T; Guitart-Masip, M; Korn, C; Chowdhury, R; Dolan, R. Current Biology. July 2012. www.cell.com
