On the face of it, complex systems might seem more resilient than simple ones, because they can have more safeguards and more redundancy built in.

However, this is not supported by real-world observation. Simply put, more complexity means more things can go wrong. Both in nature and in human society, complex controls work well at maintaining systems within tight tolerances and in expected scenarios. However, complex systems do not work well when they have to respond to circumstances that fall outside their design parameters.

In the natural world, one place where complex systems fail is the immune system. Anaphylactic shock, where the body overreacts because of an allergy to a food such as peanuts, is a good example. Peanuts are, of course, not pathogens. They are food, and the immune system should not react to them. However, people’s immune systems are made up of a number of complex systems built on top of each other over many millions of years of evolution. One of these systems is particularly liable to overreact to peanuts. In the worst case, this causes death through anaphylaxis: effectively, the release of chemicals that are meant to protect the body but that do exactly the opposite. This is an example of a safety system becoming a vulnerability when it is engaged outside normal parameters.

We are beginning to see the resilience of complex systems such as the Great Barrier Reef severely tested by climate change. Researchers have found that the reef is made up of complex interactions between sea fauna and flora, built upon other more complex interactions. Because these interactions are so many and varied, it is nigh on impossible for researchers to find exact causes for particular effects. Whilst the researchers can say confidently that climate change is having a negative effect on the coral and that bleaching events will become more common as the climate becomes warmer, they cannot say with a great deal of certainty how great other compounding effects, such as excess nutrients from farm runoff or the removal of particular fish species, might be. This is not a criticism of the science, but more an observation that predicting the future with absolute certainty is extremely difficult when there are multiple complex factors at play.

These natural systems are what some might call ‘robust yet fragile’. Within their design parameters they are strong and have longevity. Such systems tend to be good at dealing with anticipated events such as cyclones in the case of the Great Barrier Reef. However, when presented with particular challenges outside the standard model, they can fail.

Social systems and machines are not immune to the vulnerabilities that complexity can introduce. They too can be strong in some ways and brittle in others.

The troubles with the global financial system are a good example. Banking has become very complex, and banking regulation has kept up with this trend. That might seem logical, but the complex rules may themselves be causing people to calibrate the financial system to meet the rules, focussing on the administrivia of the fine print rather than the broad aims that the rules were trying to achieve. As an example, one important set of banking regulations is the Basel regulations. The Basel 1 banking regulations were 30 pages long, the Basel 2 regulations were 347 pages long and the Basel 3 regulations are 616 pages. One estimate by McKinsey says that compliance for a mid-sized bank might require as many as 200 jobs. If a bank needs to employ 200 people to cope with increased regulation, then the regulator will need some number of employees to keep up with the additional regulatory reports the banks produce, and so the merry-go-round begins!

A British banking regulator, Andrew Haldane, is now one of a number of people who question whether this has gone too far and whether banks and banking regulation have become too complex to understand. In an interesting talk he gave in 2012 in Jackson Hole, Wyoming, USA, titled ‘The Dog and the Frisbee’, Haldane uses the analogy of a dog catching a frisbee to suggest that there are hard ways and easy ways to work out how to catch one. The hard way involves some complex physics, and the easy way involves the simple rules that dogs use. Haldane points out that dogs are, in general, better at catching frisbees than physicists! I would also suggest that the chances of predicting outlier events, what Nassim Nicholas Taleb calls ‘Black Swans’, are greater using the simple predictive model.

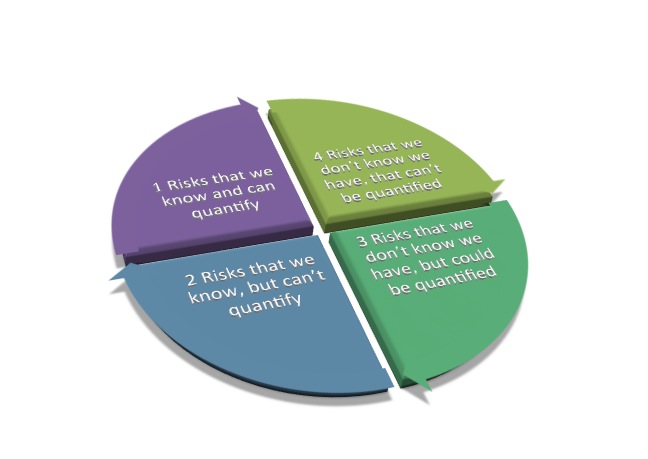

This is in some ways a challenge to the traditional thinking behind risk modelling. When I did my risk course, it was all very formulaic: list threats, list vulnerabilities and consequences, discuss tolerance for risk, develop controls, monitor, etc. I naively thought that risk assessment would save the world. But it can’t. Simple risk management just can’t work in a complex system. Firstly, it is impossible to identify all risks. To misquote Donald Rumsfeld, there are known risks, unknown risks, risks that we know we have but can’t quantify, and unknown risks that we can neither quantify nor know.

Added to this is the complex interaction between risks and the observation that elements of complex systems under stress can completely change their function (for better or worse). An analogy might be two cities under stress: in one, citizens spontaneously begin looting homes, while in the other they intensify their neighbourhood watch program.

Thus risk assessment of complex systems is in itself risky. In addition, in a complex system the aim of the risk model is homeostasis: it responds to each raindrop-sized problem, correcting the system minutely so that there are minimal shocks and the system can run as efficiently as possible. A resilience approach, by contrast, might try to develop ways to allow the system, organisation or community to be presented with minor shocks, in the hope that when the black swan event arrives, the system has learnt to cope with at least some ‘off white’ events!

Societies are also becoming more complex. There are more interconnected yet separately functioning parts of a community than there were in the past. This brings efficiency and speed to the way things are done within the community when everything is working well. However, when there is a crisis, there are more points of failure. Take two communities: community A has always been able to depend on reliable power, while community B is used to coping without electricity for several hours a day and has developed ways to adapt over months and years. If both then find that they have no power for a week, community B is better prepared to cope than community A. Community B is less efficient than community A, but it is also less brittle.

This does, however, illustrate a foible of humanity. Humans have evolved so that they are generally good at coping with crises (some better than others). However, they are not good at dealing with creeping catastrophes such as climate change or systemic problems in the banking and finance sector.

Most people see these things as problems, but think that the problems are so far away that they can be left whilst other more pressing needs are dealt with.

Sometimes you just need a good crisis to get on and fix long-term complex problems. Just hope the crisis isn’t too big.
